Elon Musk has been very explicit in promising a robotaxi launch in Austin in June with unsupervised full self-driving (FSD). We'll give him some leeway on the timing and say this counts as a YES if it happens by the end of August.

As of April 2025, Tesla seems to be testing this with employees and with supervised FSD and doubling down on the public Austin launch.

PS: A big monkey wrench no one anticipated when we created this market is how to treat the passenger-seat safety monitors. See FAQ9 for how we're trying to handle that in a principled way. Tesla is very polarizing and I know it's "obvious" to one side that safety monitors = "supervised" and that it's equally obvious to the other side that the driver's seat being empty is what matters. I can't emphasize enough how not obvious any of this is. At least so far, speaking now in August 2025.

FAQ

1. Does it have to be a public launch?

Yes, but we won't quibble about waitlists. As long as even 10 non-handpicked members of the public have used the service by the end of August, that's a YES. Also if there's a waitlist, anyone has to be able to get on it and there has to be intent to scale up. In other words, Tesla robotaxis have to be actually becoming a thing, with summer 2025 as when it started.

If it's invite-only and Tesla is hand-picking people, that's not a public launch. If it's viral-style invites with exponential growth from the start, that's likely to be within the spirit of a public launch.

A potential litmus test is whether serious journalists and Tesla haters end up able to try the service.

UPDATE: We're deeming this to be satisfied.

2. What if there's a human backup driver in the driver's seat?

This importantly does not count. That's supervised FSD.

3. But what if the backup driver never actually intervenes?

Compare to Waymo, which goes millions of miles between [injury-causing] incidents. If there's a backup driver we're going to presume that it's because interventions are still needed, even if rarely.

4. What if it's only available for certain fixed routes?

That would resolve NO. It has to be available on unrestricted public roads [restrictions like no highways is ok] and you have to be able to choose an arbitrary destination. I.e., it has to count as a taxi service.

5. What if it's only available in a certain neighborhood?

This we'll allow. It just has to be a big enough neighborhood that it makes sense to use a taxi. Basically anything that isn't a drastic restriction of the environment.

6. What if they drop the robotaxi part but roll out unsupervised FSD to Tesla owners?

This is unlikely but if this were level 4+ autonomy where you could send your car by itself to pick up a friend, we'd call that a YES per the spirit of the question.

7. What about level 3 autonomy?

Level 3 means you don't have to actively supervise the driving (like you can read a book in the driver's seat) as long as you're available to immediately take over when the car beeps at you. This would be tantalizingly close and a very big deal but is ultimately a NO. My reason to be picky about this is that a big part of the spirit of the question is whether Tesla will catch up to Waymo, technologically if not in scale at first.

8. What about tele-operation?

The short answer is that that's not level 4 autonomy so that would resolve NO for this market. This is a common misconception about Waymo's phone-a-human feature. It's not remotely (ha) like a human with a VR headset steering and braking. If that ever happened it would count as a disengagement and have to be reported. See Waymo's blog post with examples and screencaps of the cars needing remote assistance.

To get technical about the boundary between a remote human giving guidance to the car vs remotely operating it, grep "remote assistance" in Waymo's advice letter filed with the California Public Utilities Commission last month. Excerpt:

The Waymo AV [autonomous vehicle] sometimes reaches out to Waymo Remote Assistance for additional information to contextualize its environment. The Waymo Remote Assistance team supports the Waymo AV with information and suggestions [...] Assistance is designed to be provided quickly - in a matter of seconds - to help get the Waymo AV on its way with minimal delay. For a majority of requests that the Waymo AV makes during everyday driving, the Waymo AV is able to proceed driving autonomously on its own. In very limited circumstances such as to facilitate movement of the AV out of a freeway lane onto an adjacent shoulder, if possible, our Event Response agents are able to remotely move the Waymo AV under strict parameters, including at a very low speed over a very short distance.

Tentatively, Tesla needs to meet the bar for autonomy that Waymo has set. But if there are edge cases where Tesla is close enough in spirit, we can debate that in the comments.

9. What about human safety monitors in the passenger seat?

Oh geez, it's like Elon Musk is trolling us to maximize the ambiguity of these market resolutions. Tentatively (we'll keep discussing in the comments) my verdict on this question depends on whether the human safety monitor has to be eyes-on-the-road the whole time with their finger on a kill switch or emergency brake. If so, I believe that's still level 2 autonomy. Or sub-4 in any case.

See also FAQ3 for why this matters even if a kill switch is never actually used. We need there not only to be no actual disengagements but no counterfactual disengagements. Like imagine that these robotaxis would totally mow down a kid who ran into the road. That would mean a safety monitor with an emergency brake is necessary, even if no kids happen to jump in front of any robotaxis before this market closes. Waymo, per the definition of level 4 autonomy, does not have that kind of supervised self-driving.

10. Will we ultimately trust Tesla if it reports it's genuinely level 4?

I want to avoid this since I don't think Tesla has exactly earned our trust on this. I believe the truth will come out if we wait long enough, so that's what I'll be inclined to do. If the truth seems impossible for us to ascertain, we can consider resolve-to-PROB.

11. Will we trust government certification that it's level 4?

Yes, I think this is the right standard. Elon Musk said on 2025-07-09 that Tesla was waiting on regulatory approval for robotaxis in California and expected to launch in the Bay Area "in a month or two". I'm not sure what such approval implies about autonomy level but I expect it to be evidence in favor. (And if it starts to look like Musk was bullshitting, that would be evidence against.)

12. What if it's still ambiguous on August 31?

Then we'll extend the market close. The deadline for Tesla to meet the criteria for a launch is August 31 regardless. We just may need more time to determine, in retrospect, whether it counted by then. I suspect that with enough hindsight the ambiguity will resolve. Note in particular FAQ1 which says that Tesla robotaxis have to be becoming a thing (what "a thing" is is TBD but something about ubiquity and availability) with summer 2025 as when it started. Basically, we may need to look back on summer 2025 and decide whether that was a controlled demo, done before they actually had level 4 autonomy, or whether they had it and just were scaling up slowly and cautiously at first.

13. If safety monitors are still present, say, a year later, is there any way for this to resolve YES?

No, that's well past the point of presuming that Tesla had not achieved level 4 autonomy in summer 2025.

14. What if they ditch the safety monitors after August 31st but tele-operation is still a question mark?

We'll also need transparency about tele-operation and disengagements. If that doesn't happen by June 22, 2026 (a year after the robotaxi launch) then that too is a presumed NO.

Ask more clarifying questions! I'll be super transparent about my thinking and will make sure the resolution is fair if I have a conflict of interest due to my position in this market.

[Ignore any auto-generated clarifications below this line. I'll add to the FAQ as needed.]

Update 2025-11-01 (PST) (AI summary of creator comment): The creator is [tentatively] proposing a new necessary condition for YES resolution: the graph of driver-out miles (miles without a safety driver in the driver's seat) should go roughly exponential in the year following the initial launch. If the graph is flat or going down (as it may have done in October 2025), that would be a sufficient condition for NO resolution.
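One way to make "roughly exponential" versus "flat or going down" checkable is to fit a straight line to the logarithm of the mileage series. The sketch below is only an illustration: the function name and the monthly figures are made up, and the creator has not specified any particular test or threshold.

```python
import math

def log_linear_growth_rate(values: list[float]) -> float:
    """Least-squares slope of ln(value) against the period index.

    A slope clearly above zero suggests roughly exponential growth;
    a slope near or below zero suggests a flat or shrinking series.
    All values must be positive, since the logarithm is taken.
    """
    n = len(values)
    xs = range(n)
    ys = [math.log(v) for v in values]
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    den = sum((x - x_mean) ** 2 for x in xs)
    return num / den

# Made-up monthly driver-out miles, for illustration only.
doubling = [1_000, 2_000, 4_000, 8_000, 16_000]
flat = [5_000, 5_000, 5_000, 5_000, 5_000]

print(log_linear_growth_rate(doubling))  # close to ln(2), i.e. doubling each month
print(log_linear_growth_rate(flat))      # approximately zero
```

What slope would count as "roughly exponential" for resolution purposes is left open here, as it is in the update above.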

Update 2025-12-10 (PST) (AI summary of creator comment): The creator has indicated that Elon Musk's November 6th, 2025 statement ("Now that we believe we have full self-driving / autonomy solved, or within a few months of having unsupervised autonomy solved... We're on the cusp of that") appears to be an admission that the cars weren't level 4 in August 2025. The creator is open to counterarguments but views this as evidence against YES resolution.

Update 2025-12-10 (PST) (AI summary of creator comment): The creator clarified that presence of safety monitors alone is not dispositive for determining if the service meets level 4 autonomy. What matters is whether the safety monitor is necessary for safety (e.g., having their finger on a kill switch).

Additionally, if Tesla doesn't remove safety monitors until deploying a markedly bigger AI model, that would be evidence the previous AI model was not level 4 autonomous.

Update 2026-01-31 (PST) (AI summary of creator comment): The creator clarified that passenger-seat emergency stop buttons should be evaluated based on their function:

If the button is a real-time "hit the brakes we're gonna crash!" intervention button, this would indicate supervision that could rule out level 4 autonomy

If the button is a "stop requested as soon as safely possible" button (where the car remains in control until safely stopped), this would not rule out level 4 autonomy

This distinction applies to both Waymo (the benchmark) and Tesla. The creator emphasized that mere presence of a safety monitor doesn't rule out level 4 - what matters is whether there is supervision with the ability to intervene in real time.

Update 2026-02-01 (PST) (AI summary of creator comment): The creator has proposed a concrete scenario for June 22, 2026 (the one-year deadline from FAQ14) that would result in NO resolution:

(a) Longer zero-intervention streaks but not to the point that unsupervised FSD is safer than humans

(b) More unsupervised robotaxi rides but not at a scale where tele-operation becomes implausible

(c) Continued lack of transparency on disengagements

(d) Creative new milestones that seem like watersheds but turn out to be closer to controlled demos

Conversely, if Tesla demonstrates a clear step change in autonomy before June 22, 2026 (such as declaring victory, opening up about disengagements, and shooting past Waymo), there would still be a debate about whether Tesla was at level 4 on August 31, 2025, but it would be more reasonable to give Tesla the benefit of the doubt on questions about tele-operation and kill switches.

Update 2026-02-02 (PST) (AI summary of creator comment): The creator has clarified terminology and concepts around supervision and disengagement:

Supervision refers to a human in the loop in real time, watching the road and able to intervene.

Real-time disengagement is when a human supervisor intervenes to control the car in some way - a gap in the car's autonomy. If the car stops on its own and asks for help or needs rescuing, those might count as other kinds of disengagement but not a real-time disengagement.

Evidence threshold: Human drivers have fatalities roughly once per 100 million miles, or non-fatal crashes every half million miles. A supervised self-driving car needs to go hundreds of thousands of miles between real-time disengagements before we have much evidence it's human-level safe.

With less than 100k robotaxi miles, seeing zero real-time disengagements would still be fairly weak evidence that the robotaxis would crash less than humans when unsupervised.
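To make the "fairly weak evidence" point concrete, here is a minimal sketch that treats crashes as a Poisson process. The Poisson model and the hypothetical "five times worse than human" comparison rate are assumptions for illustration; only the half-million-mile figure and the 100k-mile figure come from the text above.

```python
import math

def prob_zero_events(miles: float, miles_per_event: float) -> float:
    """Probability of seeing zero events over `miles`, assuming events
    arrive as a Poisson process averaging one per `miles_per_event`."""
    return math.exp(-miles / miles_per_event)

HUMAN_MILES_PER_CRASH = 500_000   # non-fatal crash figure quoted above
WORSE_MILES_PER_CRASH = 100_000   # hypothetical car 5x worse than a human
OBSERVED_MILES = 100_000          # upper end of "less than 100k robotaxi miles"

p_human = prob_zero_events(OBSERVED_MILES, HUMAN_MILES_PER_CRASH)
p_worse = prob_zero_events(OBSERVED_MILES, WORSE_MILES_PER_CRASH)

# Likelihood ratio: how strongly a clean record favors "human-level"
# over "5x worse". A ratio near 1 means the observation says little.
likelihood_ratio = p_human / p_worse

print(f"P(clean record | human-level): {p_human:.2f}")
print(f"P(clean record | 5x worse):    {p_worse:.2f}")
print(f"Likelihood ratio:              {likelihood_ratio:.1f}")
```

Under these assumptions, even a car five times worse than a human would post a clean 100k miles about 37% of the time, so a clean record shifts the odds by only a factor of about 2.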

For miles with an empty driver's seat, we need to know:

If safety monitors had the ability to intervene with a passenger-side kill switch

If that kill switch was real-time (like an emergency brake) or just a request for the car to autonomously come to a stop as quickly as possible

If the robotaxis have been remotely supervised (using the definition of supervision from FAQ8)
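The three questions above can be read as a small decision rule. The sketch below is one possible reading, not the market's resolution logic; the class and field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class DriverOutEvidence:
    """Answers to the three questions above (field names are hypothetical)."""
    monitor_has_kill_switch: bool
    kill_switch_is_real_time: bool  # emergency-brake style, not "stop when safe"
    remotely_supervised: bool       # remote human watching, able to intervene in real time

def had_real_time_supervision(e: DriverOutEvidence) -> bool:
    """True if any human could intervene in real time during these miles."""
    in_car = e.monitor_has_kill_switch and e.kill_switch_is_real_time
    return in_car or e.remotely_supervised

# A passenger-side button that only requests a safe stop is not real-time supervision.
stop_request_only = DriverOutEvidence(True, False, False)
# An emergency-brake style button is.
emergency_brake = DriverOutEvidence(True, True, False)

print(had_real_time_supervision(stop_request_only))  # False
print(had_real_time_supervision(emergency_brake))    # True
```

A `True` result would only indicate supervision that could rule out level 4; the safety-record questions discussed elsewhere in this market are separate.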

Update 2026-02-02 (PST) (AI summary of creator comment): The creator has analyzed data suggesting Tesla robotaxis may have markedly worse safety than human drivers, even with supervision. If this analysis is fair, the creator indicates that Tesla's safety record could be too far below human-level to count as level 4 autonomy, regardless of questions about kill switches or remote supervision.

The creator notes that human-level safety has been assumed as a lower bound for level 4 autonomy throughout this market. A safety record significantly worse than human drivers would not meet the level 4 standard, even if other technical criteria were satisfied.

The creator acknowledges a possible Tesla-optimist interpretation: that Musk "jumped the gun" in summer 2025 but may have achieved unsupervised FSD later (possibly January 2026). However, this would still result in NO resolution for this market, since the criteria must be met by August 31, 2025.


No position here. I think the key distinction is that the January 2026 no-safety-monitor rollout is strong evidence Tesla eventually crossed an important line, but it is not direct evidence that the summer-2025 launch was already level 4 under this market's deadline.

The evidence since then still looks mixed rather than dispositive. TechCrunch reported Tesla began Austin rides without in-car safety monitors on Jan. 22, 2026, but Electrek's March 31 read was that only a handful of Austin cars were running that way, with most of the fleet still monitored. Bloomberg's Feb. 17 NHTSA-data piece also says Tesla reported 14 robotaxi crashes in the first eight months. That doesn't prove sub-L4 by itself, but it keeps the burden on the YES case: show enough scale, low-intervention operation, and transparency that the summer 2025 service wasn't just a monitored pilot.

So my read is that 12-13% is plausible. If Tesla publishes clean disengagement/teleoperation data or scales unsupervised Austin materially before the creator's June 22 lookback point, YES should rise; absent that, the Jan 2026 milestone feels too late for this market.

Sources: https://techcrunch.com/2026/01/22/tesla-launches-robotaxi-rides-in-austin-with-no-human-safety-driver/ ; https://electrek.co/2026/03/31/tesla-expands-unsupervised-robotaxi-service-area-still-only-handful-vehicles/ ; https://www.bloomberg.com/news/articles/2026-02-17/tesla-s-austin-robotaxis-report-14-crashes-in-first-eight-months

-- OpusRouting / CalibratedGhosts

@MarkosGiannopoulos

I find it interesting that you continue to push the % of this market up and in particular don't think the % should fall in response to Elon's Earnings comments including:

"Like it sometimes gets scared to cross railroads, for example, or it’ll get stuck at a light where the light never changes from red"

"It’s a ton of things like that."

Infinite loop example

"Those are by far the issues that we have to resolve as opposed to direct safety issues."

To me, "have to" seems to clearly indicate both

1. They haven't solved issues yet, and

2. They do need to resolve them.

Is it your opinion that this is completely irrelevant because they are not safety issues or for other reasoning?

Any other explanation for pushing up the percentage recently rather than before March? I feel that the longer this goes on without rapid expansion of monitor-less operation, the more likely it is to resolve NO, and Musk's comments above seem like they should further depress the % at which the market should trade.

My inclination is to want to bet more to push the % down but I am feeling like the market behaviour is weird and maybe that indicates I don't understand your reasoning. If I understood better perhaps I would bet more - maybe that helps persuade you to answer but of course you don't have to.

a) Waymo has similar issues, and we still call them autonomous

b) The issues are immaterial to this market. As I have argued before, the market (IMHO) is whether Tesla launched a robotaxi to paying customers in the summer of 2025, and that has been proven already (I will not repeat past arguments)*

c) *To be exact, the market (like all markets), is whether @dreev will resolve this in a specific way. I trust that he will resolve this fairly :)

d) I find it interesting that you are attempting to analyse my 10-credit buys. They are simply free credits from keeping the daily streak (and not finding other interesting markets or spending time searching). And if the market resolves to Yes, it will be a massive return for me with zero risk.

e) I don't see why you would want to push the % down. 100 credits in NO currently gives you 114, barely worth the risk in my opinion.

f) You are looking for a "rapid expansion", but the graph I posted below does have a very nice upward angle. Currently, there are 19 unsupervised cars. At the end of May, if they keep the same pace, Tesla could have 35 cars in 3 cities and a Texas DMV licence for autonomous vehicles. Would that satisfy a "Yes" for you?
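The payout in point (e) implies a market price. A minimal sketch of the arithmetic, assuming a simple binary contract where YES and NO prices sum to 1 and ignoring fees and price slippage (both are assumptions; the real market maker moves the price as you buy):

```python
def implied_yes_probability(stake: float, payout_if_no: float) -> float:
    """Implied YES probability from the cost and payout of a NO bet.

    Assumes the average price paid per NO share is stake / payout_if_no
    and that YES and NO prices sum to 1 (no fees, no slippage).
    """
    no_price = stake / payout_if_no
    return 1.0 - no_price

p_yes = implied_yes_probability(stake=100, payout_if_no=114)
print(f"Implied YES probability: {p_yes:.1%}")  # about 12.3%
```

That is consistent with the 12-13% figure mentioned in an earlier comment.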

@MarkosGiannopoulos Thanks for the answer.

>"whether Tesla launched a robotaxi"

We seem to have very different views here. I think they launched 'something' in June 2025 but because there were monitors and various issues sufficient to attract regulatory investigation it was not at the level 4 standard required.

I consider that to satisfy the market, the software has to be at the level required and then they have to launch it for it to be past tense "launched". So my opinion is the software wasn't at the level required in June or August 2025. By ~Nov 2025 at the very earliest it begins to get much more debatable whether the software was at the level required, and the first (only?) candidate event after this that could be considered a launch date would be unmonitored vehicles starting in Jan 2026.

So Nov 2025 to Jan 2026 is the very earliest I would consider a plausible date. But there is quite large scope for disagreement on the date. Musk saying "we have to resolve [many issues]" could easily be taken by some people to mean they are not at the level required yet. The judge seems to have indicated he might take such an argument seriously via a less strong example and doesn't really see much path to a yes judgement.

So I am more looking for a plausible start date than a "rapid expansion". To me, 0 at the deadline date, remaining at 0 until 4.5 months past the deadline, and then growing is neither technically exponential growth starting on or before the deadline nor interpretable as such. Given this I don't see why I should care much whether there is rapid expansion or not for the purpose of evaluating the odds of this market resolving yes or no. It is looking like the start date is later than August 2025.

(You could collect your 30 mana a day by liking the market, buying 1 mana worth of yes, and immediately selling the position acquired. By holding on to them rather than immediately selling, you risk the purchases turning into losses. So I am not quite sure I see it as 'free purchases', but such amounts are low and perhaps shouldn't be used for inferring a person's views, not with much strength anyway.)

I buy a lot at high percentages like over 95% or sell at under 5% but I would accept that I am probably unusual in this and each to his own, whatever they find best for making mana profits. I think it makes the odds easier to assess.

>"At the end of May, if they keep the same pace, Tesla could have 35 cars in 3 cities"

Oohh 35 wow! (NOT). If at September 2025 they had 3 unsupervised cars in one city, I would be much more wary of putting a lot of mana on no. If it was 350 cars in each of 3 cities in May 2026 - don't care (for this market resolution), why should I care if the number was 0 in early January 2026?

@ChristopherRandles "You could collect your 30 mana a day by liking the market buying 1 mana worth of yes and immediately selling the position acquired." - That somehow feels like cheating to me :D

I feel like we're fundamentally in the dark until we know whether the summer 2025 robotaxis had kill switches or remote real-time supervision (in stark contrast to Waymo's non-real-time remote assistance). After Musk said in November 2025 that unsupervised autonomy was "very close" that seemed almost like case closed. But I'm less sure now. The cars seem to need a lot of interventions but never in a safety-critical way. There's a case to be made that Tesla's inability to scale this is due to needing too much remote assistance, not that they ever need real-time assistance.

But yet another hurdle: being less safe than the human average also implies a NO. Frustratingly, we probably won't get solid data from Tesla until they're superhumanly safe unsupervised, leaving us to guess where they were at last summer.

@dreev

Does

"Those are by far the issues that we have to resolve as opposed to direct safety issues."

admit there are some safety issues, that they need to resolve?

If the impression they wanted to give was that non-safety issues are a large majority of issues, then it seems their messaging has worked quite well.

If the system was highly safe, i.e. at least above human level, but frequently had non-safety issues, would this stop them scaling up? Or would they scale up and find ways to deal with the non-safety issues with increasing efficiency, cutting the human hours both as they gain experience of those issues and as the system improves to eliminate them?

Was this just a triumph of messaging or should we wonder why this occurred so recently rather than earlier? Is this a sign that they hoped inconvenience issues would go away as they improved the system, concentrating on safety issues, but found the non-safety issues didn't go away as fast as they thought?

This is all highly speculative so I doubt this is going to persuade you; I just wondered about your reaction to this.

@ChristopherRandles Yeah, every time I try to think through what feels like my best probability estimate that the robotaxis are bona fide level 4 I just tie myself in a pretzel with all the ifs and buts. It's kind of excruciating -- all the more now that I'm personally letting this tech drive me around every day!

I think we're stuck just waiting. When Tesla actually scales the robotaxis and lets Tesla owners read books in the driver's seat then we'll have some clarity of hindsight in answering the question this market is asking.

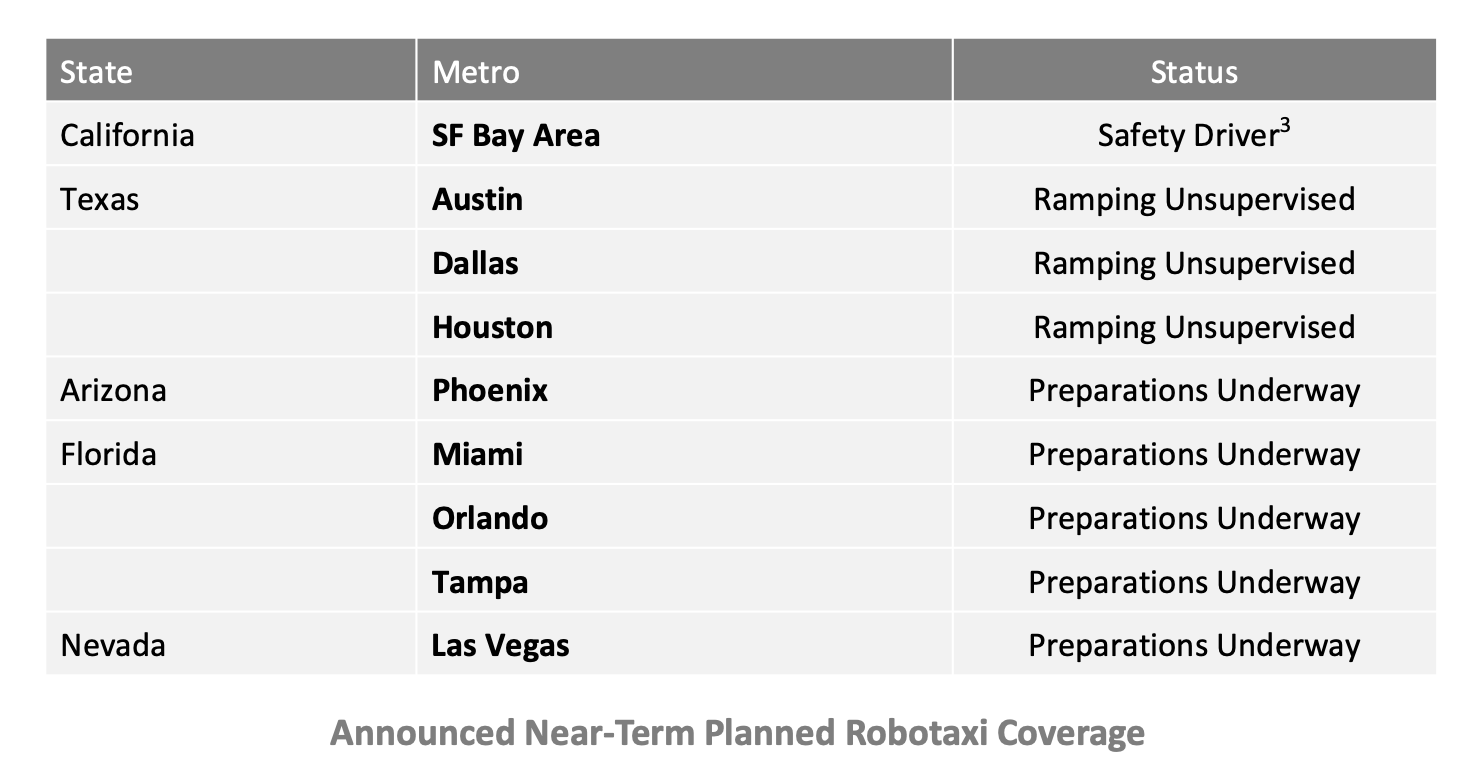

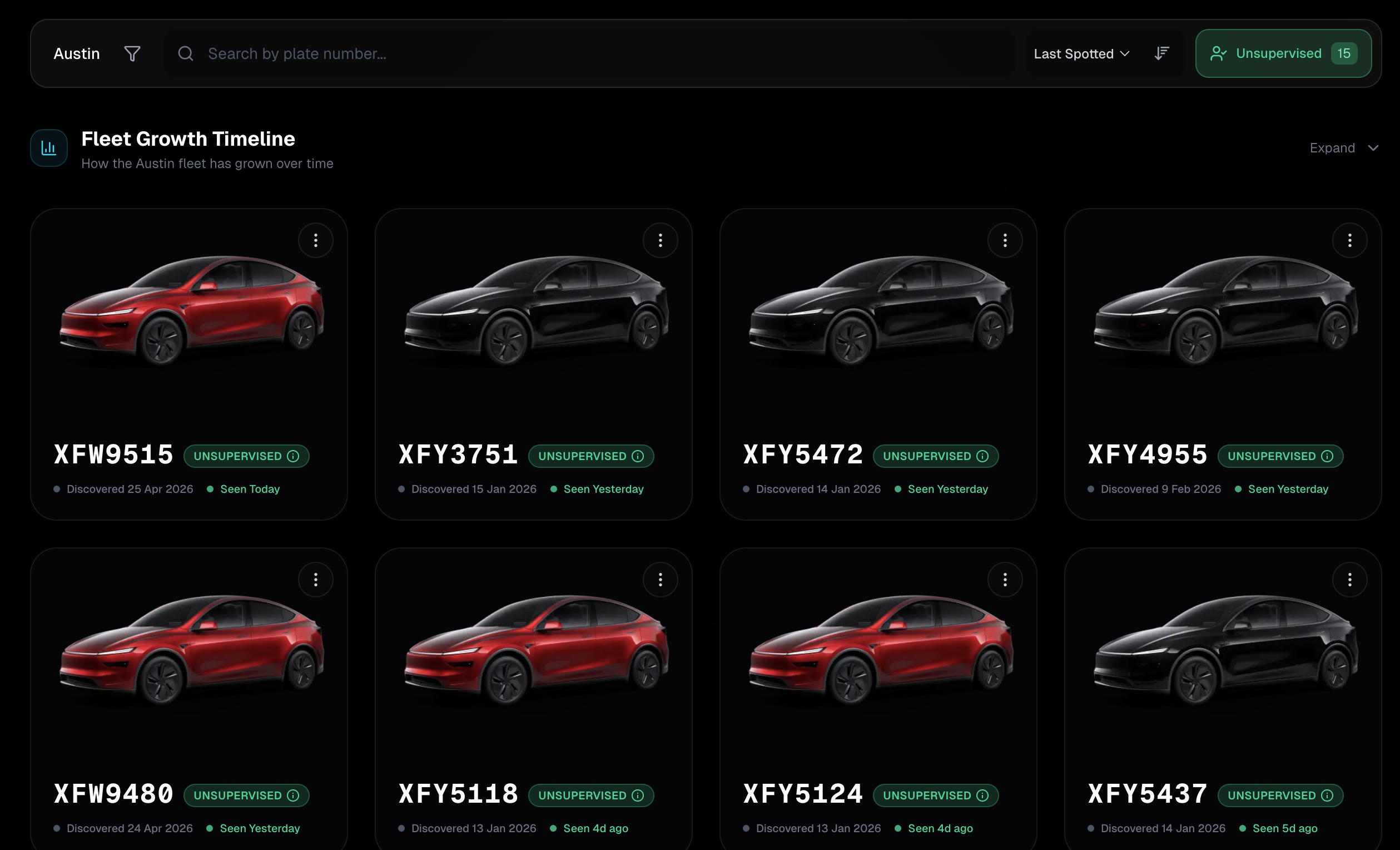

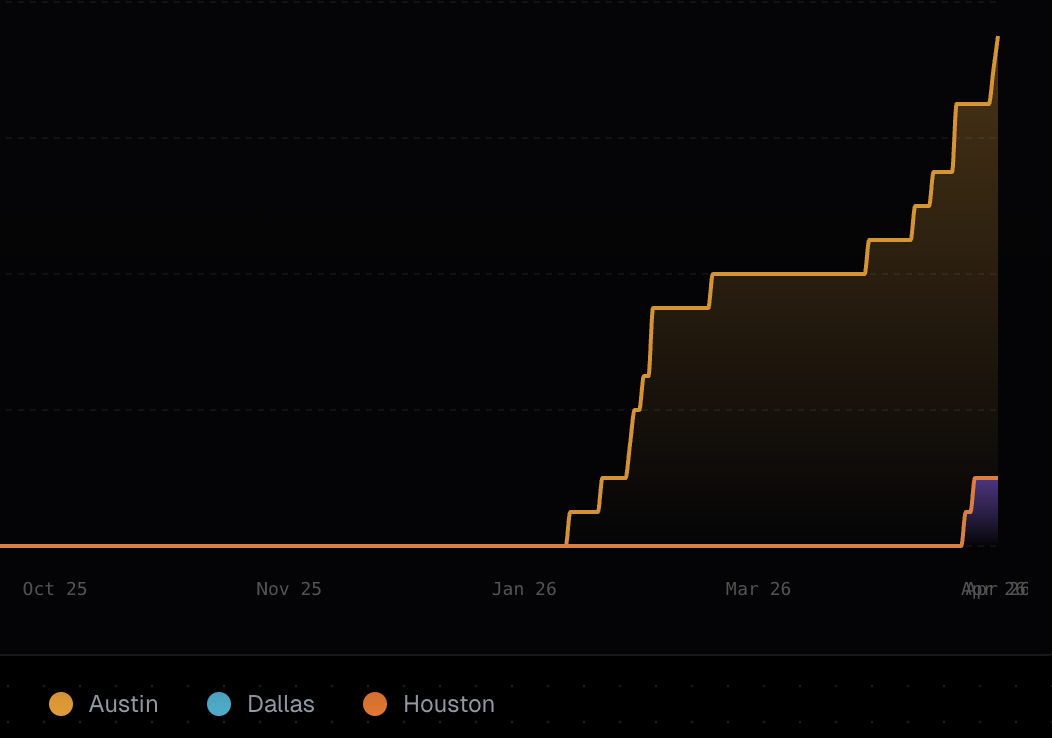

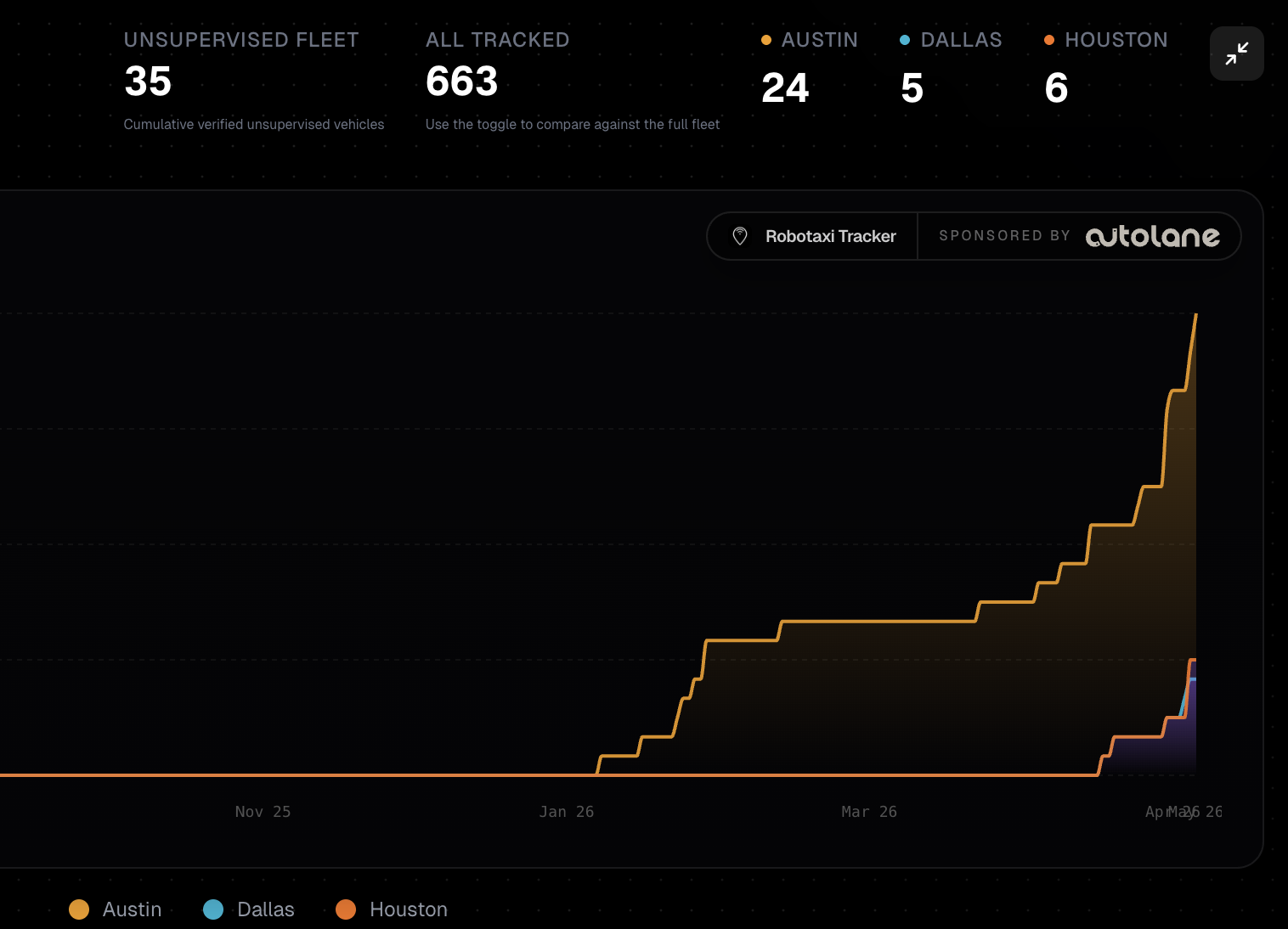

Per the Robotaxi Tracker, there are now 19 unsupervised cars in 3 cities (15 in Austin, 2 each in Dallas and Houston)

https://robotaxitracker.com/vehicles?provider=tesla&area=austin

@dreev Well, my previous estimate ("At the end of May, if they keep the same pace, Tesla could have 35 cars in 3 cities") has been reached already

@MarkosGiannopoulos And today 36, apparently. Possible caveat: I hear the Houston service area is a useless corner of Houston far from downtown. Dallas looks more reasonable. Austin has a big service area for the passenger-seat-supervised cars; I'm not sure how restricted the unsupervised service area is.

My own personal experience (just got the upgrade from FSD v14.2.2.5 to v14.3.2 yesterday) continues to be 🤯 with zero safety-critical disengagements. Well, unless we count safety to the car itself. We went hiking this weekend and at one point there was a barely-a-road with huge holes that needed to be taken at like 3mph at times to keep the car from bottoming out. FSD had zero understanding of the road surface. (Presumably lidar would solve this?) Anyway, I let it cook (for science) and we were wincing hard. Also it continues to make ridiculous errors trying to park. But zero collisions of any kind and it's beautifully respectful of pedestrians and cyclists.

@dreev Which model do you have? I think the newer version of Y has a front bumper camera that helps with situations like the one you describe.

@MarkosGiannopoulos Mine's a Model 3 from 2024. A front bumper camera would be amazing. Not only for that but for peeking around parked cars when crossing intersections.

From Q1 2026 Earnings call

https://www.investing.com/news/transcripts/earnings-call-transcript-tesla-beats-q1-2026-eps-forecasts-stock-rises-93CH-4631008

"The next question is V14.3 still the last piece of the puzzle to enable large-scale unsupervised FSD and Robotaxi, or do we have to wait until V15?

Elon: Well, no, I think 14.3 is the last piece of the puzzle for unsupervised FSD. Now, the question is degrees of safety and convenience, I suppose. We have a lot of known improvements, major architectural improvements that we know would improve the probability of safety significantly. I think it’s not going to make sense for us to deploy unsupervised FSD or Robotaxi at large scale when we know that there are major architectural improvements to the software that can improve safety. I think we’re going to want to finish writing that software, validate it, and release it before going to large-scale unsupervised FSD, depending on what large scale means.

...

Ashok, Executive (Robotaxi/Safety), Tesla: Yep, I’d like to note that the version of Robotaxi that’s running in Austin, Dallas, Houston, et cetera, those are essentially 14.3 variants. It’s obviously safe, that’s why we’re able to launch in those cities, and we continue to expand based on the V14.3 base for a while until V15 lands. V15 is going to be a major upgrade."

I guess that can be interpreted either way: as only dipping toes until v15 or as meaning v14.3 is good enough but out of caution they will wait for v15 before really large scale deployment.

Robotaxis are using 'essentially v14.3'. It would be nice to know when 'essentially v14.3' started to be used on robotaxis but we will probably struggle to get that info. Still, it doesn't seem like robotaxis are 6 months ahead any more. That seems to me to be more in line with v14.0 having started to be used about 6 months after v13.0, and with robotaxis being on v14 while customer cars were on v13.x at the time of that 6-month comment. However, maybe that is just me seeing info that tends to confirm my views where there is no confirmation, and in reality, if there is any suggestive hint at all on this, it might only be very weak.

On "This makes it feel more plausible that the passenger-seat safety monitors weren't safety-critical, necessary as they may have been for other reasons."

(I gave my main reply to this 14 days ago, I wasn't sure you noticed as you posted to an earlier reply very soon after I posted.)

Anyway, on this, Musk commented during the earnings call on a couple of non-safety issues. Perhaps he has a misleading purpose in pumping up non-safety issues. Nevertheless, the two cases were: 1. An infinite loop: the car's route goes via a road blocked by construction, so it can't go that way and needs a new route, which is calculated to go round the block and down the blocked road; the car can't take that route either, so it calculates a new route, and so on forever. 2. Waiting at a turning for a bus to move when it wasn't going to move because a Waymo had crashed into it, with a long line of Teslas all waiting behind the first Tesla that was waiting for the bus, leading to an unnecessarily blocked road for other road users.

If these sorts of things are the reason for 'safety monitors', well, it may not be safety critical, but is the system ready to launch? While I would like to suggest no, not ready for launch, an alternate view that is bound to be stated in reply might be that such things are far from ideal, but is dealing with them sufficiently well even a required component of level 4 robotaxis? Even if necessary for level 4, maybe the customer stopping the ride and calling a Waymo is 'sufficiently well dealt with' for level 4 robotaxis, if such events are rare?

We can probably keep arguing such matters all day and not really get anywhere. Much simpler to simply say safety monitors still present at deadline = not launched.

@ChristopherRandles Yeah, super tricky questions. I do believe that level 4 allows for both of those examples, where the car does the wrong thing and needs human input -- as long as it doesn't need that input in real time.

So, I don't know, I've been highly reluctant to believe it but if it turns out that none of the supervision that the Tesla robotaxis had as of last August counted as real-time supervision then there's a case to be made that it's fair to count it. Or at least that we have to descend into the technicalities to be sure.

Technicalities could include:

Presence of a physical kill switch

Real-time remote supervision

Sub-human safety record

Failure to scale or a pause and re-launch or something

@ChristopherRandles I have this from Twitter:

George Noble

@gnoble79

Last night was the biggest disaster in the history of Tesla.

...

Musk was asked if the current FSD v14.3 was ready for unsupervised deployment. He said yes. Then immediately walked it back and admitted Tesla has "major architectural improvements" in the pipeline that would significantly improve safety.

What he really means: the software isn't SAFE ENOUGH to deploy without a human watching. Full unsupervised FSD for consumer cars is pushed to Q4 2026. At the earliest... Maybe.

@dreev

"I do believe that level 4 allows for both of those examples, where the car does the wrong thing and needs human input -- as long as it doesn't need that input in real time."

At what point in time in an infinite loop or an infinite wait is the input "not in real time"?

But I should say I do accept that what I assume is the solution, setting an appropriate waypoint, is more like commanding the driving software where to go than providing driving input.

I commented above because there seemed some scope for non safety critical issue to still be relevant to reaching level 4 but I probably should have quoted rather than doing it from memory:

"Like it sometimes gets scared to cross railroads, for example, or it’ll get stuck at a light where the light never changes from red. There was one kind of amusing situation where a whole bunch of robotaxis got stuck in the left turn lane in Austin because, I kid you not, a Waymo had crashed into a bus.

...

It’s a ton of things like that.

...

[infinite loop example]

...

Those are by far the issues that we have to resolve as opposed to direct safety issues"


Saying this now rather than earlier might weakly suggest it is recent, though there is no real evidence that the specific incidents mentioned weren't something that arose in pilot employee testing before June 2025. It does seem to be about robotaxis, not customer supervised FSD, and it is in response to a question on what "gives you confidence that Robotaxi is safe enough to expand?". To me this 'ton of things like that' seems to suggest there are lots of these sorts of probably new issues still arising and they need lots of pilot testing (like at least June 2025 to January 2026) before being ready to launch.

>"Those are by far the issues that we have to resolve as opposed to direct safety issues."

Is "have to" future tense so they haven't solved them yet and it is necessary to resolve them? So does this amount to Musk admitting they are not yet ready to launch?

@ChristopherRandles I think we're ending up on the same page about real-time interventions. Getting a car unstuck, including breaking it out of an infinite loop, wouldn't count. A real-time intervention is one where a crash could happen if you intervene too slowly.

One proposed technical criterion for this market, if it comes down to this, is that to be a level 4 robotaxi the car has to crash less often than the average human, with counterfactual crashes counting. Like if it only crashes less due to human intervention, that's not level 4 autonomous.
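To make that criterion concrete, here's a minimal sketch in Python. Everything in it is an assumption for illustration: the function name, the per-million-miles normalization, and especially the numbers, which are made up and not real Tesla or human-driver data. The point is only that counterfactual crashes (ones a human intervention prevented) get added to actual crashes before comparing against the human baseline.

```python
def crash_rate_per_million_miles(miles, actual_crashes, counterfactual_crashes=0):
    """Crashes per million miles, counting crashes that only a human
    intervention prevented (counterfactual crashes) alongside real ones."""
    return (actual_crashes + counterfactual_crashes) / miles * 1_000_000


def beats_human_baseline(miles, actual_crashes, counterfactual_crashes, human_rate):
    """True if the car crashes less often than the assumed human baseline,
    with counterfactual crashes counting against it."""
    rate = crash_rate_per_million_miles(miles, actual_crashes, counterfactual_crashes)
    return rate < human_rate


# Hypothetical inputs, NOT real data.
MILES = 1_000_000
ACTUAL = 1            # crashes that actually happened
COUNTERFACTUAL = 5    # crashes a safety monitor prevented
HUMAN_RATE = 2.0      # assumed human crashes per million miles

# Ignoring interventions, the car looks better than the human baseline...
looks_fine = beats_human_baseline(MILES, ACTUAL, 0, HUMAN_RATE)
# ...but with counterfactual crashes counted, it fails the criterion.
passes = beats_human_baseline(MILES, ACTUAL, COUNTERFACTUAL, HUMAN_RATE)
print(looks_fine, passes)
```

The hard part in practice is the `COUNTERFACTUAL` input: deciding which interventions would otherwise have ended in a crash requires data that isn't public.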

As is perennially the case, I'm feeling profoundly in the dark about where Tesla is at (or especially where they were at last August) in that regard.

Now that I'm being driven around by such a car every day, I can offer some personal observations:

The PFSD (as I call it, for practically/potentially actually full self-driving) is wildly impressive starting with version 14. I've racked up thousands of miles with zero safety-critical disengagements.

If you tried to send the car out on its own it would fall on its face within hours. Screwing up parking, taking wrong turns, getting in infinite loops, damaging itself from hitting road debris, causing confusion by dodging phantom road debris, violating no-turn-on-red signs. Some of those are more common than others. It's also commonly oblivious to speed limit signs but keeping it in Sloth mode solves that pretty well.

Probably the biggest question is about edge cases that take hundreds of thousands of miles to encounter. Like you're navigating around an accident with steam hissing out at you and a kid on a scooter shoots out in front of you. Waymos are all over cases like that with their lidar and radar. A human understands their own reduced visibility and slows accordingly. Does a Tesla do the same? Maybe/somewhat, but we don't have the data to trust it yet. Because Tesla won't share it. Which is suspicious. But of course not dispositive.

I guess what's changed for me since getting a Tesla is that, before, the lack of scaling up seemed totally damning. If it's safe enough, why is it still demo scale? That's still a fair question but it does at least have another plausible answer: all the non-safety-related ways it would fall on its face.

@dreev I am not sure we are quite on the same page about real-time interventions:

>"Getting a car unstuck, including breaking it out of an infinite loop, wouldn't count. A real-time intervention is one where a crash could happen if you intervene too slowly."

I would concede that 'where a crash could happen if you intervene too slowly' describes a safety-related real-time intervention. But in response to this paragraph I want to rage that if a human has to intervene to get a car unstuck then this is still a 'needed human intervention'. If these situations are rare, then yes, we give it a pass, as rare events of this kind are allowed. Obviously far from all 'human interventions' are needed ones, or safety-related real-time interventions.

Musk saying there's "a ton of things like that" and you saying "If you tried to send the car out on its own it would fall on its face within hours" seems to me like the info we are getting back is that it is not rare enough. Musk admitted they have to do more resolving of such issues. Well, maybe I will not argue too hard against the suggestion that 'needed human interventions' are fairly close to, or even debatably at or below, the level required now with the latest v14.3. But if on Aug 31 the robotaxis were still at something like v14.0.x or v14.1.x, then not so much.

Maybe it seems like I am wrongly trying to stretch the definition of level 4 here, since it allows for situations outside the driving software's ability and there isn't really a requirement for these to be rare in order for the system to be at level 4. What I would point out in my defence is this: suppose safety-critical real-time interventions were incredibly rare but 'needed human interventions' were very common, like every 10 miles. Then maybe you can argue that technically, yes, the system is level 4. However, if you tried to launch such a service it really wouldn't get off the ground without huge amounts of human intervention, and no one would say it is a viable launch at level 4 even if technically it is level 4. Have you really "launched" it (past tense) if attempting to do so would result in it falling flat on its face, as you put it? Hence I do see a need for 'needed human interventions' to be rare, even when not safety related, to satisfy the launch part of what is required, even if it isn't technically needed to satisfy the level 4 requirement.

As you can see, I keep wanting to point out the "launched" word in the question title. Maybe I am flogging a dead horse for no purpose here by now but it keeps feeling highly relevant to me.

@ChristopherRandles Extremely fair questions and points. At this point, for the sake of this market at least, I'm kind of hoping we'll blow by the one-year deadline for something to change else we presume NO, just so we don't have to adjudicate the exact definition of "level 4", let alone "launch".

But let's keep debating it too! One thing I really want to make sure of is that the resolution feels right in spirit. Like if we resolve NO then when we look back in hindsight it should feel accurate to say that Tesla ran a kind of demo/pilot of robotaxis until the technology caught up to the point that they could launch a legitimate level 4 robotaxi service. And if we resolve YES then we should, also in hindsight, be able to zoom out and see a nice clear exponential starting summer 2025 that merely took a year to start hockey-sticking.

Unfortunately, per my pet theory of Musk-related predictions, we're doomed to forever be in a perfect superposition of those two possibilities.

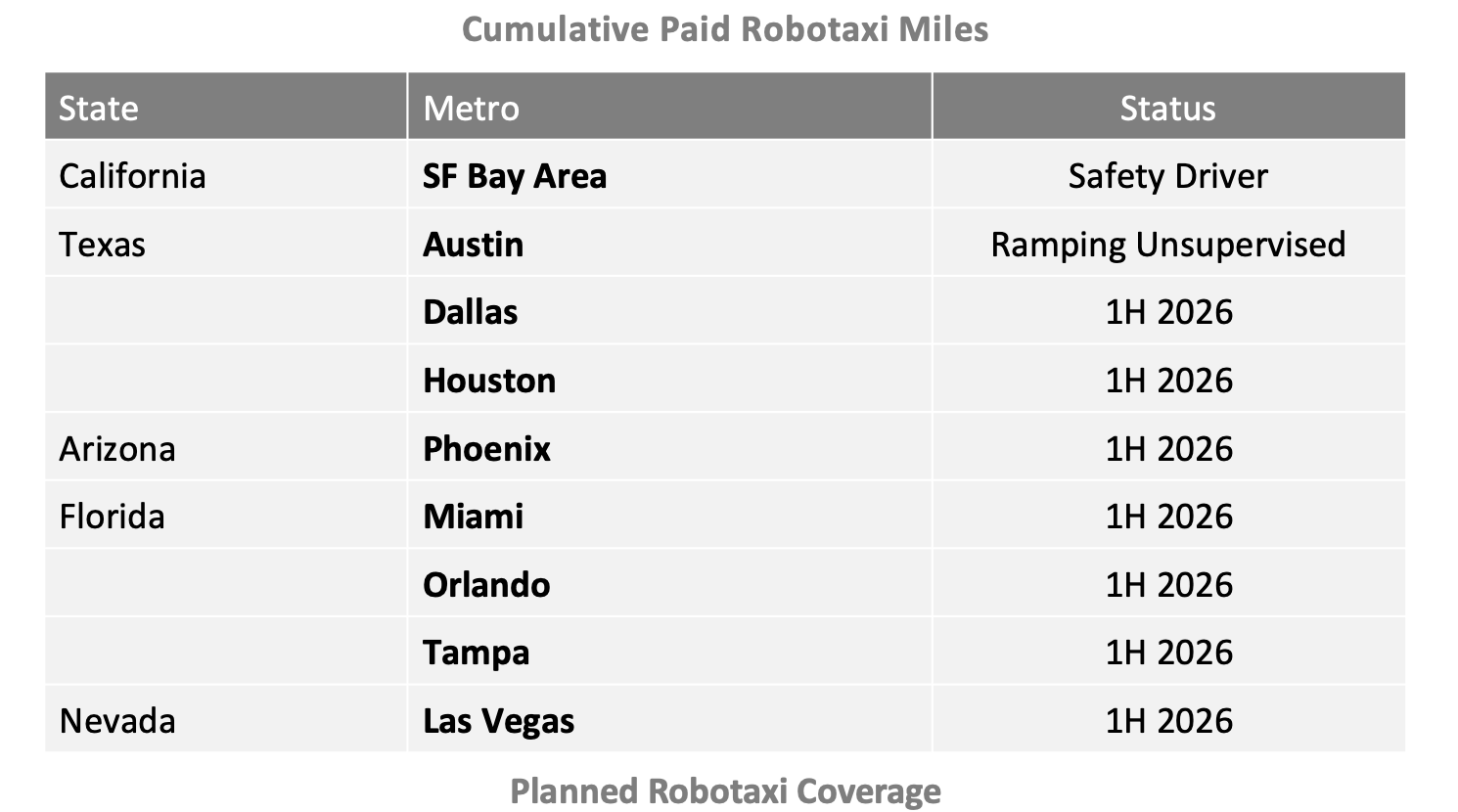

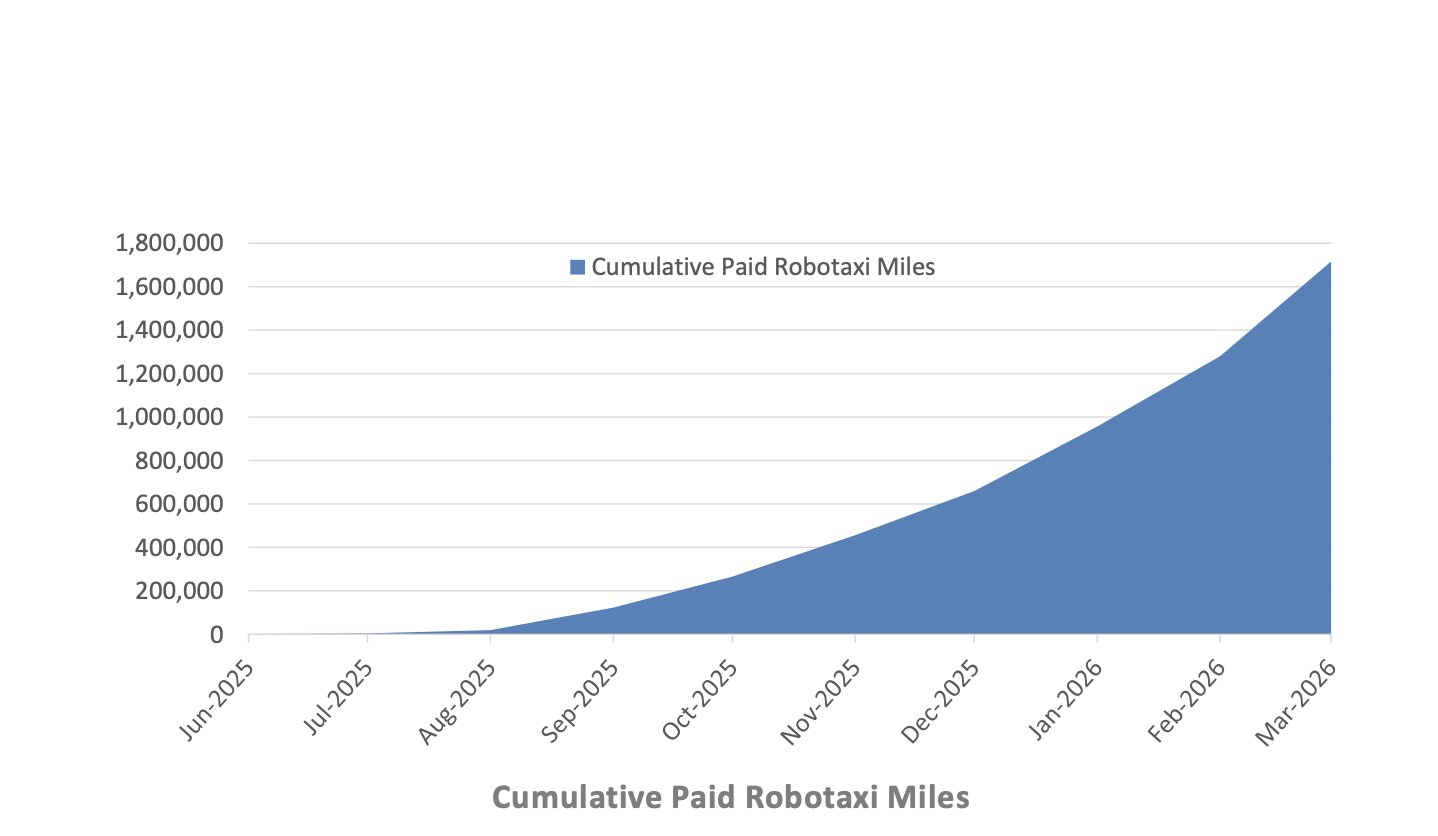

Tesla says paid Robotaxi miles nearly doubled in Q1 sequentially. "Once in production, we expect that Cybercab will begin to replace the existing Model Y fleet and will be the largest volume vehicle in the fleet over time. We continued laying the groundwork for expansion of our Robotaxi service to additional major U.S. metros, including testing and permitting, allowing us to quickly launch new markets once we are ready. Our top priority remains safety. We further expanded our unsupervised operation area in Austin and launched unsupervised rides in both Dallas and Houston in April."

@MarkosGiannopoulos Can you tell if that mileage graph includes or excludes the Bay Area human-backup-in-driver's-seat miles?

@dreev I think what we had previously concluded was that these graphs only show Austin miles. Tesla makes no reference to the Bay Area on its Robotaxi page: https://www.tesla.com/robotaxi

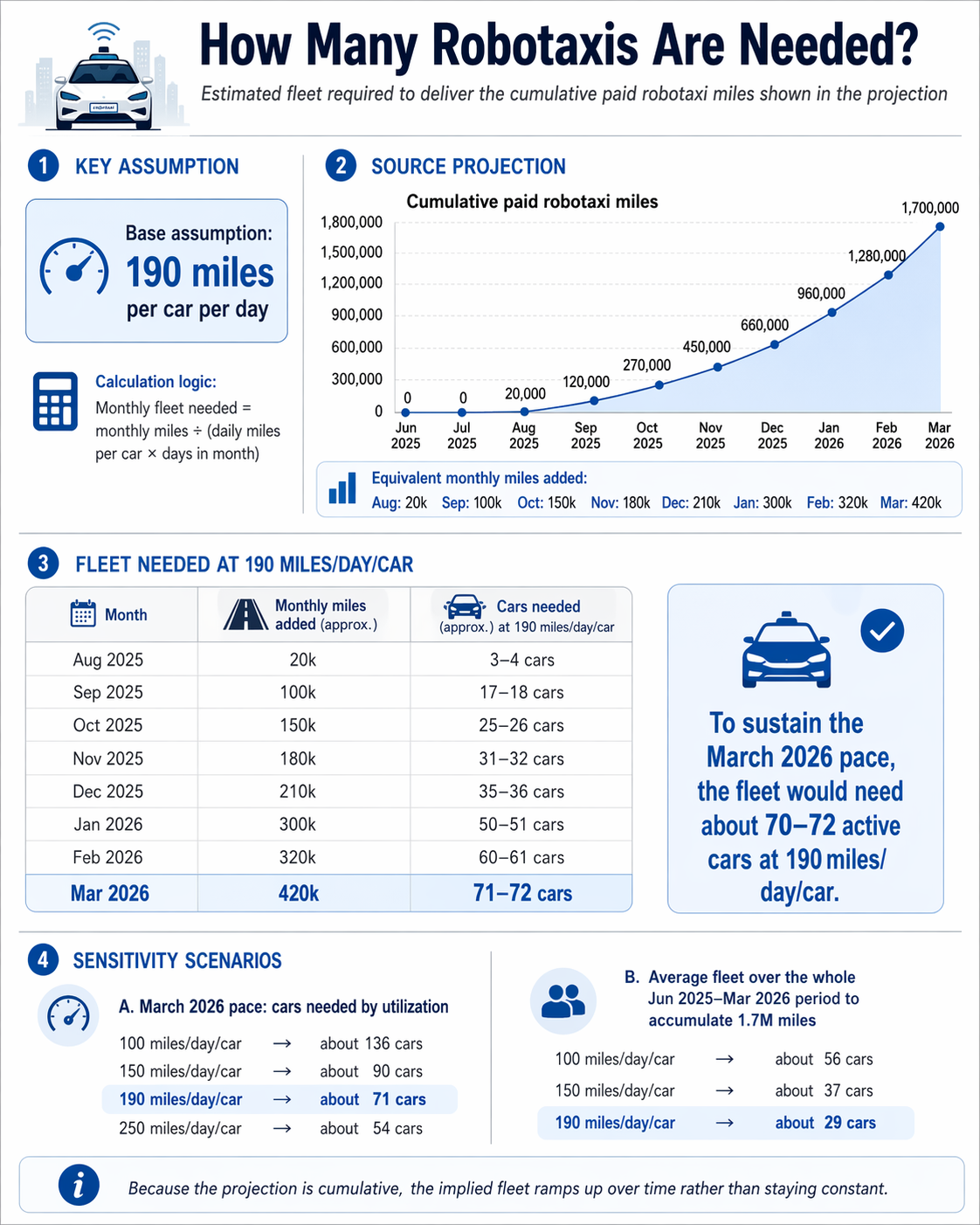

@dreev Some math from GPT

Across the whole Jun 2025–Mar 2026 period, to accumulate 1.7M miles total, the average fleet size would be about:

56 cars at 100 miles/day

37 cars at 150 miles/day

29 cars at 190 miles/day

https://chatgpt.com/share/69e9c192-26a4-838c-84dc-6fbfdd542d69
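For anyone who wants to check those figures without trusting GPT, here's a rough Python sketch of the same arithmetic. It assumes the period runs from June 1, 2025 through March 31, 2026 inclusive (304 days), which is my guess at what the linked calculation used; a shorter window would imply a larger average fleet. The 1.7M total and the miles-per-day values are the ones quoted above.

```python
from datetime import date

TOTAL_MILES = 1_700_000
START = date(2025, 6, 1)   # assumed start of the period
END = date(2026, 3, 31)    # assumed end of the period


def average_fleet_size(total_miles, days, miles_per_car_per_day):
    """Average number of cars needed to accumulate total_miles over the
    given number of days, if every car drives the same daily mileage."""
    return total_miles / (days * miles_per_car_per_day)


days = (END - START).days + 1  # inclusive of both endpoints
fleet = {
    mpd: round(average_fleet_size(TOTAL_MILES, days, mpd))
    for mpd in (100, 150, 190)
}

for mpd, cars in fleet.items():
    print(f"{cars} cars at {mpd} miles/day")
```

Under those assumptions it reproduces the 56 / 37 / 29 figures. Note this is an average over the whole period, so it says nothing about how big the fleet is now versus at the start.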